
10/14/2021 Docker For Mac Tensorflow

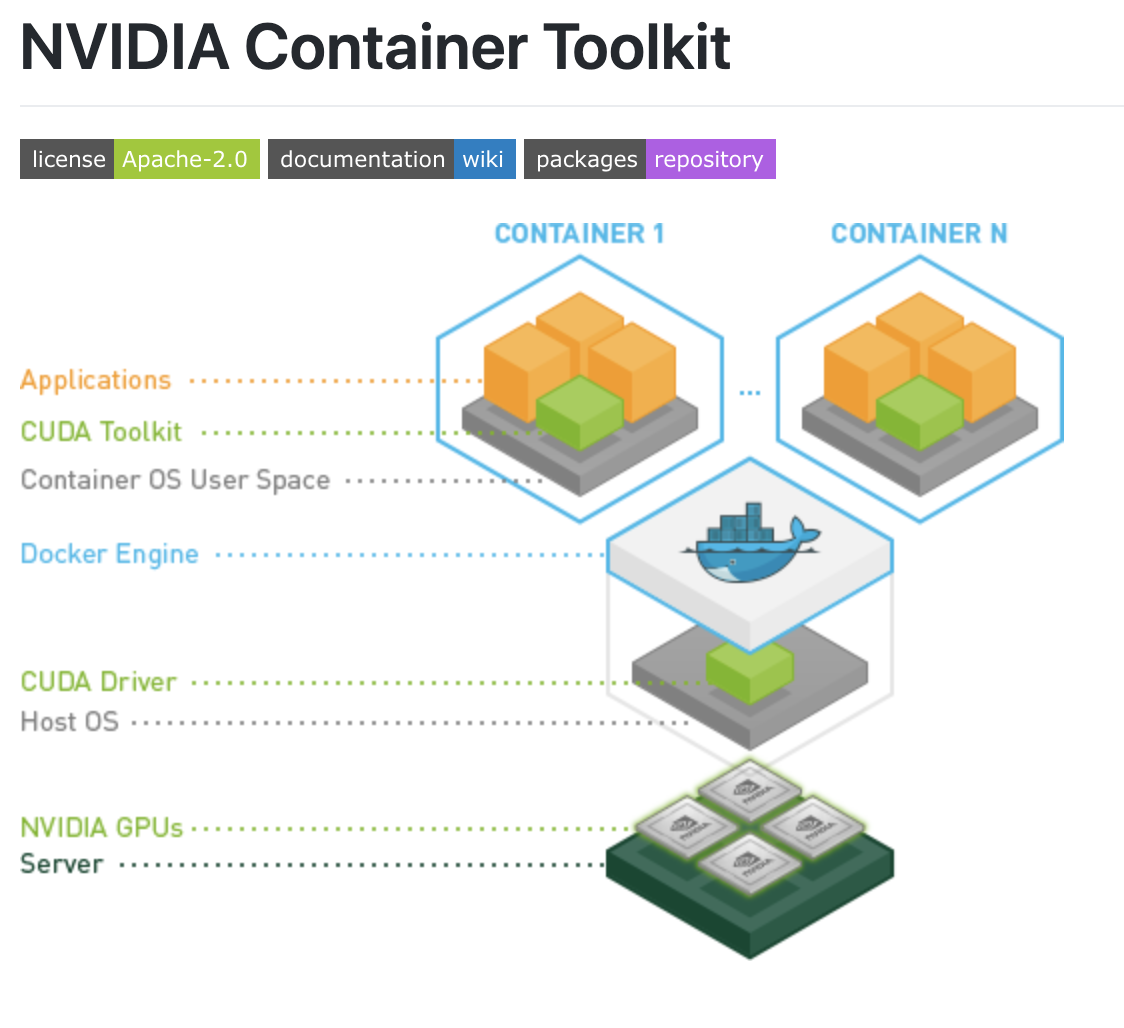

Use Docker and Kubernetes to scale your deployment and isolate user processes. Global companies like Amazon, Microsoft, Google, Apple, and Facebook have hundreds of ML models in production. You can explore the same data with pandas, scikit-learn, ggplot2, and TensorFlow. Let's see how easy it is to launch a more complex application like TensorFlow, which requires NumPy, Bazel, and myriad other dependencies. Docker can be installed on your host computer on both Linux and Mac. Google provides pre-built Docker images of TensorFlow through their public container repository, and Microsoft provides a Dockerfile for CNTK that you can build yourself. Docker images give you a head start on developing your own applications on Windows and Mac. Docker's comprehensive end-to-end platform includes UIs, CLIs, APIs, and security that are engineered to work together across the entire application delivery lifecycle.

Machine learning (ML) has the potential to greatly improve businesses, but this can only happen when models are put in production and users can interact with them. Then we'll show you how to run a TensorFlow Lite model using the accelerator.

“Model serving is simply the exposure of a trained model so that it can be accessed by an endpoint. Endpoint here can be a direct user or other software.”

In this tutorial, I'm going to show you how to serve ML models using TensorFlow Serving, an efficient, flexible, high-performance serving system for machine learning models, designed for production environments. TensorFlow itself can be installed on Windows, Ubuntu, and macOS in a virtual environment, with pip, with Anaconda, or with Docker.

The high-level architecture diagram shows the important components that make up TF Serving. The model source provides plugins and functionality to help you load models (in TF Serving terms, servables) from numerous locations, e.g. a GCS or AWS S3 bucket. Once a model is loaded, the next component, the model loader, is notified. The model loaders provide the functionality to load models from a given source independent of the model type, the data type, or even the use case. In short, model loaders provide efficient functions to load and unload a model (servable) from the source.
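To make the hand-off between these components concrete, here is a toy sketch in plain Python. It is not TF Serving's actual API (the real components are written in C++); `ToyLoader`, `ToyManager`, `toy_source`, and the model name `img_classifier` are all hypothetical names invented for illustration. The sketch assumes a "serve only the latest version" policy.

```python
from dataclasses import dataclass, field


@dataclass
class ToyLoader:
    """Hypothetical stand-in for a loader: loads and unloads one servable version."""
    name: str
    version: int
    loaded: bool = False

    def load(self) -> None:
        self.loaded = True   # a real loader would read the model from storage here

    def unload(self) -> None:
        self.loaded = False  # a real loader would release the model's memory here


@dataclass
class ToyManager:
    """Hypothetical manager that keeps only the highest version of each model loaded."""
    active: dict = field(default_factory=dict)  # model name -> ToyLoader

    def on_new_version(self, loader: ToyLoader) -> None:
        current = self.active.get(loader.name)
        if current is not None and current.version >= loader.version:
            return  # ignore versions that are not newer than the one being served
        loader.load()
        if current is not None:
            current.unload()
        self.active[loader.name] = loader

    def serving_version(self, name: str) -> int:
        return self.active[name].version


def toy_source(versions_found: dict, manager: ToyManager) -> None:
    """Hypothetical source: announces every version it discovers to the manager."""
    for name, versions in versions_found.items():
        for version in sorted(versions):
            manager.on_new_version(ToyLoader(name, version))


manager = ToyManager()
toy_source({"img_classifier": [1, 3, 2]}, manager)
print(manager.serving_version("img_classifier"))
```

The point of the split is that the source only discovers versions, the loader only knows how to load and unload one of them, and the manager alone decides which version is live.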

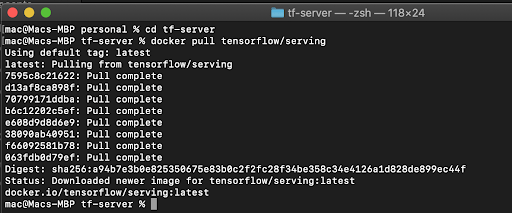

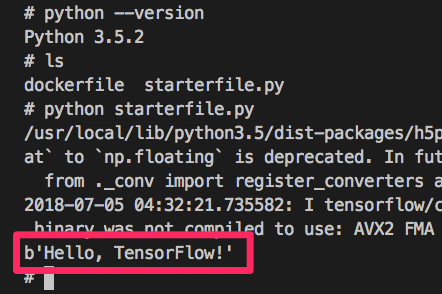

The manager handles the lifecycle of servables. That is, it manages when model updates are made, which version of a model to use for inference, the rules and policies for inference, and so on. The servable handler provides the necessary APIs and interfaces for communicating with TF Serving. TF Serving provides two important types of servable handler: REST and gRPC. You'll learn the difference between these two in later parts of this tutorial. For more in-depth details of the TF Serving architecture, see the official TensorFlow Serving architecture guide.

After downloading and installing Docker via the installer for your platform, run the command below in a terminal or command prompt to confirm that Docker has been successfully installed:

    docker run hello-world

Which should output:

    Unable to find image 'hello-world:latest' locally
    Digest: sha256:4cf9c47f86df71d48364001ede3a4fcd85ae80ce02ebad74156906caff5378bc
    Status: Downloaded newer image for hello-world:latest

    This message shows that your installation appears to be working correctly.

    To generate this message, Docker took the following steps:
     1. The Docker client contacted the Docker daemon.
     2. The Docker daemon pulled the "hello-world" image from the Docker Hub.
     3. The Docker daemon created a new container from that image which runs the
        executable that produces the output you are currently reading.
     4. The Docker daemon streamed that output to the Docker client, which sent it
        to your terminal.

If you get the output above, then Docker has been successfully installed on your system.

Installing Tensorflow Serving

Now that you have Docker properly installed, you're going to use it to download TF Serving.

Below, I'll walk you through loading the dataset and then building a simple deep learning classifier.

Step 1: Create a new project directory and open it in your code editor. I call mine tf-server, and I have it open in VS Code.

Step 2: In the project folder, create a new script called model.py, and paste the code below:

    import matplotlib.pyplot as plt
    from tensorflow.keras import Sequential
    from tensorflow.keras.datasets.mnist import load_data
    from tensorflow.keras.layers import Dense
    from tensorflow.keras.layers import Conv2D
    from tensorflow.keras.layers import MaxPool2D
    from tensorflow.keras.layers import Flatten
    from tensorflow.keras.layers import Dropout

    # load the MNIST dataset
    (x_train, y_train), (x_test, y_test) = load_data()
    print(f'Train: X={x_train.shape}')

    # reshape the data to have a single channel
    x_train = x_train.reshape((x_train.shape[0], x_train.shape[1], x_train.shape[2], 1))
    x_test = x_test.reshape((x_test.shape[0], x_test.shape[1], x_test.shape[2], 1))

    # normalize pixel values to [0, 1]
    x_train = x_train.astype('float32') / 255.0
    x_test = x_test.astype('float32') / 255.0

    # set input image shape and number of classes (digits 0-9)
    input_shape = x_train.shape[1:]
    n_classes = 10

    # define the model; the pool size and dropout rate are assumed values
    model = Sequential()
    model.add(Conv2D(64, (3, 3), activation='relu', input_shape=input_shape))
    model.add(MaxPool2D((2, 2)))
    model.add(Conv2D(32, (3, 3), activation='relu'))
    model.add(MaxPool2D((2, 2)))
    model.add(Flatten())
    model.add(Dense(50, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(n_classes, activation='softmax'))

    model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])
    model.fit(x_train, y_train, epochs=10, batch_size=128, verbose=1)

    loss, acc = model.evaluate(x_test, y_test, verbose=0)
    print(f'Accuracy: {acc:.3f}')

    # placeholder path; TF Serving expects a numeric version subdirectory
    file_path = './img_classifier/1/'
    # on TensorFlow 2.16+ (Keras 3), model.export(file_path) replaces this call
    model.save(filepath=file_path, save_format='tf')

The code above is pretty straightforward. First, you import the necessary packages you'll be using and load the MNIST dataset prepackaged with TensorFlow. Then, you reshape the data to use a single channel (black and white) and normalize it by dividing by 255.0. Next, you create a simple Convolutional Neural Network (CNN) with 10 units at the output because you're predicting 10 classes (0–9). After fitting, you evaluate it on the test data, print the accuracy, and finally save the model.

REST is a communication “protocol” used by web applications. It defines a communication style on how clients communicate with web services. REST clients communicate with the server using the standard HTTP methods like GET, POST, DELETE, etc. The payloads of the requests are mostly encoded in JSON format.

The standard data format used with gRPC is called the protocol buffer. gRPC provides low-latency communication and smaller payloads than REST, and is preferred when working with extremely large files during inference.

In this tutorial, you'll use a REST endpoint, since it is easier to use and inspect. It should also be noted that TensorFlow Serving will provision both endpoints when you run it, so you do not need to worry about extra configuration and setup.
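As a rough illustration of what a REST call to the saved classifier could look like, the sketch below builds a JSON predict request using only the Python standard library. It assumes TF Serving is listening on its default REST port (8501) on localhost and that the model is exposed under the hypothetical name `img_classifier`; adjust both to match your setup. The `preprocess` helper mirrors the reshape-and-normalize step used during training.

```python
import json
import urllib.request

# Assumptions: TF Serving listens on its default REST port (8501) on localhost,
# and the model is exposed under the hypothetical name "img_classifier".
HOST = "http://localhost:8501"
MODEL_NAME = "img_classifier"


def preprocess(image):
    """Scale a 28x28 grid of 0-255 pixels to [0, 1] and add a trailing channel axis."""
    return [[[pixel / 255.0] for pixel in row] for row in image]


def build_predict_request(images, model_name=MODEL_NAME, host=HOST):
    """Return the URL and JSON body for a TF Serving REST predict call."""
    url = f"{host}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": [preprocess(img) for img in images]})
    return url, body


def predict(images):
    """POST the images and return the index of the highest score for each one."""
    url, body = build_predict_request(images)
    request = urllib.request.Request(
        url, data=body.encode("utf-8"), headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        predictions = json.loads(response.read())["predictions"]
    return [scores.index(max(scores)) for scores in predictions]


# Build (but do not send) a request for one blank 28x28 image.
blank = [[0] * 28 for _ in range(28)]
url, body = build_predict_request([blank])
print(url)
```

Calling `predict([blank])` only works once a TF Serving container is actually running with the model loaded; building the request separately makes the payload easy to inspect first.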


Author: Jimmy
